Koala & Joey

Aged care sucks. A user-focused design project to optimise the aged care industry and promote active aging.

1. Brief & Objective

Koala and Joey was the outcome of Interface Design Studio, a formative subject of the Bachelor of Computing Design at the University of Sydney. We were tasked with developing a solution, in a problem area of our choice, employing autonomous vehicles and some form of digital interface.

With AVs poised to reshape society, we wanted to find an application for autonomous vehicles that solves a societal problem.

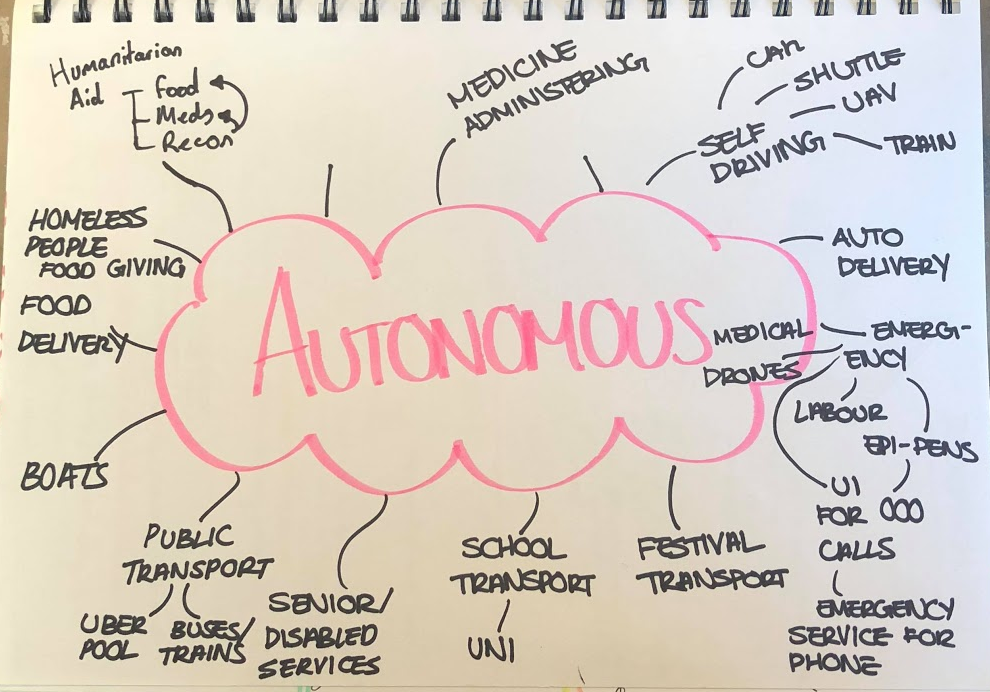

Ideas exploration

After exploring autonomous vehicles’ application to disaster relief, public transport and humanitarian services, we decided to focus on solving problems faced by senior citizens. With an aging population and an archaic, insufficient aged care system, we believed this topic had the greatest potential for an effective solution.

2. So, what sucks when you’re old?

As a team, we conducted 6 semi-structured interviews with senior citizens at a local retirement village. In addition to taking notes during the interviews, we transcribed the recordings for later analysis. This was followed by a further 8 observational studies of how senior citizens use public transport.

We analysed the records in an affinity diagram, finding a range of issues from hearing loss to loneliness to money anxieties. However, two discoveries stood out most to me.

- Senior citizens struggled most with the short and infrequent tasks, such as shopping and taking the bins out.

- Senior citizens spoke about aged care as something they’d regretfully have to submit to.

This got me thinking: how can we use autonomous vehicles to address the first point and prolong the time before the second becomes a necessity?

3. How can we make it suck less?

Because loneliness was by far the most prevalent issue we recorded, we wanted to use autonomous vehicles in a way that promotes human-to-human interaction.

With these two concepts in mind, we landed on the idea of using autonomous vehicles to promote short bouts of volunteering in those key areas.

Our goal was to improve the lives of the elderly, but how could we also benefit the volunteers?

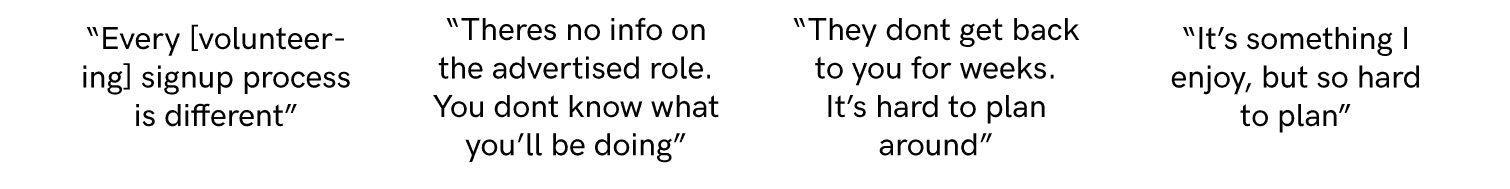

To understand volunteers’ goals, needs and fears, we conducted a focus group with 6 students who had experience volunteering in different sectors. We heard

If we could provide a better volunteering experience, one that delivers much-needed support to senior citizens without aged care plans, then we had a concept.

Because of variations in the way each demographic uses (and owns) technology, we identified that we’d need two UIs. My group member, Soomin, would develop a smartphone app interface for volunteers, and I would develop a tablet interface for senior citizens.

4. Early prototypes and testing

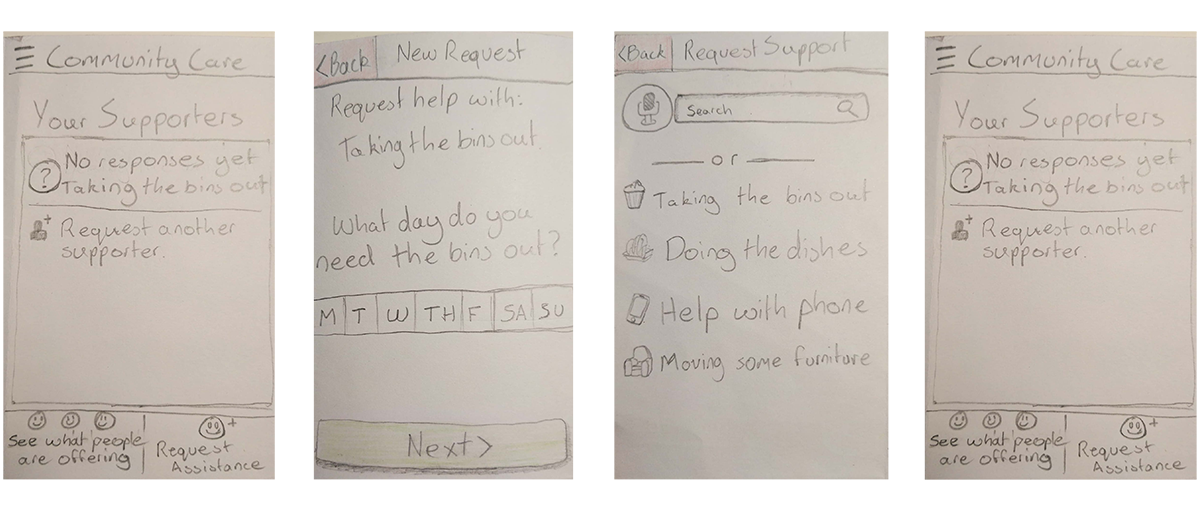

I developed a number of pen-and-paper wireframes that, with feedback from my tutor and Soomin, evolved into a lo-fi prototype.

Low-fidelity wireframes showing the app flow.

These prototypes were made interactive using Pop before being taken back to the retirement village and tested with 4 Sydneysider senior citizens.

Here’s what we found:

- Text input is fiddly and tedious; instead, opt for valuable but generic defaults.

- Reduce the number of interactive elements; senior citizens are not as used to processing text in parallel as we are.

- Additionally, a linear UI flow reduces information overload.

- With eyesight degradation, large bold text is key for legibility.

- Provide context for the user’s actions and inform them of what will happen next, even if it means long instructive text.

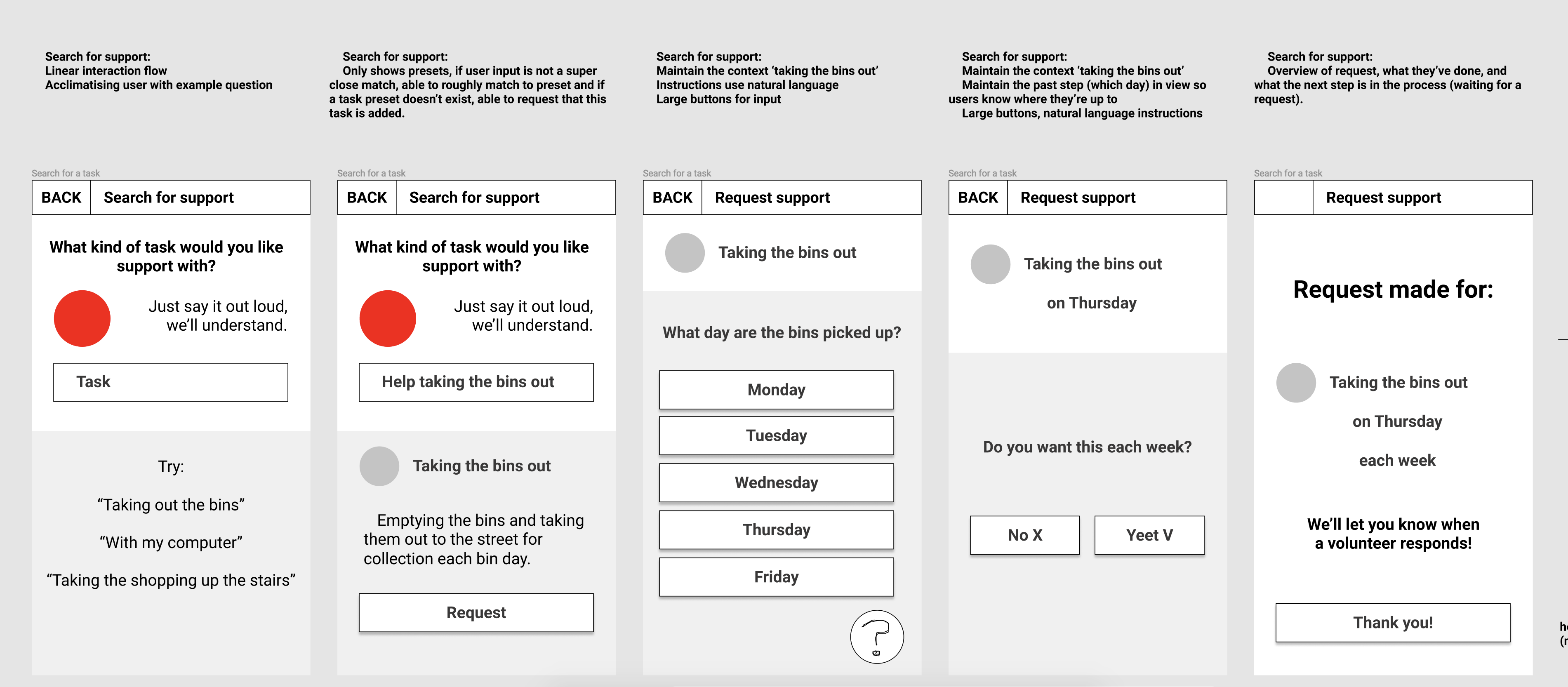

5. High-fidelity wireframes


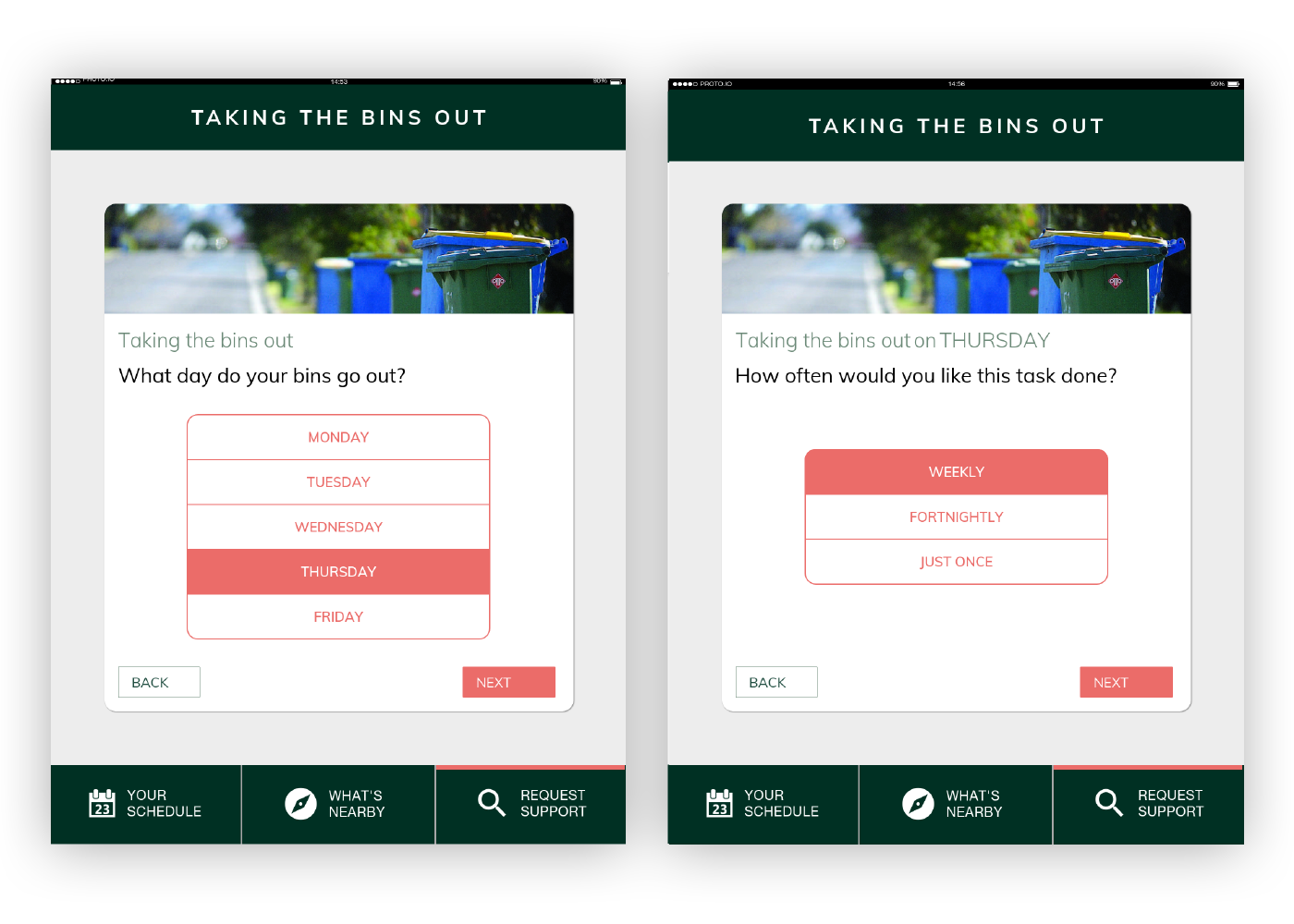

These insights were realised by breaking each task into a ‘quantified UI’, where each screen has a single purpose; using voice input as an alternative to typing in text boxes; and adding context to each screen showing how complete the task is.

6. Final Product

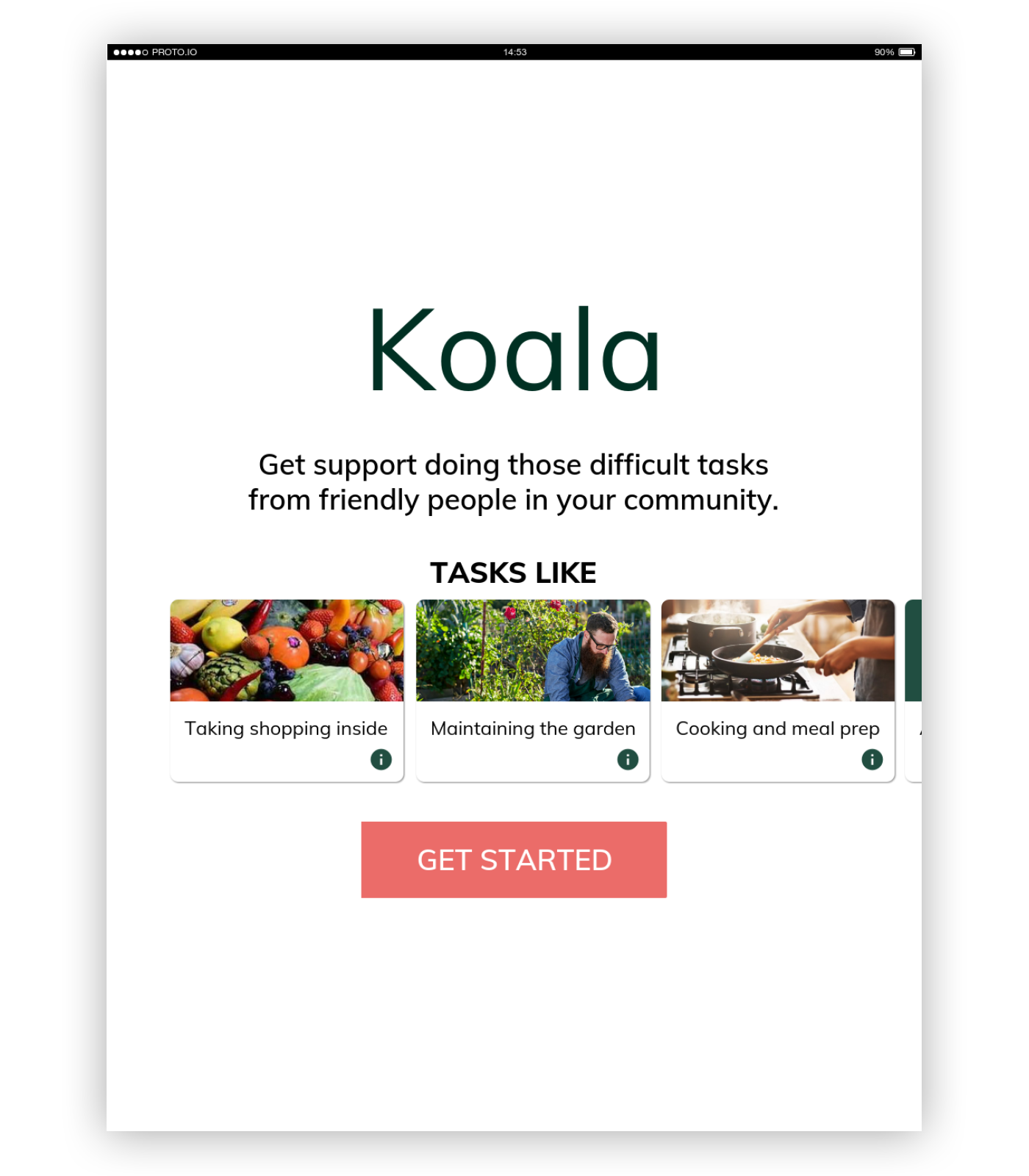

Login/signup page showing users the potential tasks they can receive help with

Image of app homepage showing potential tasks that can be completed.

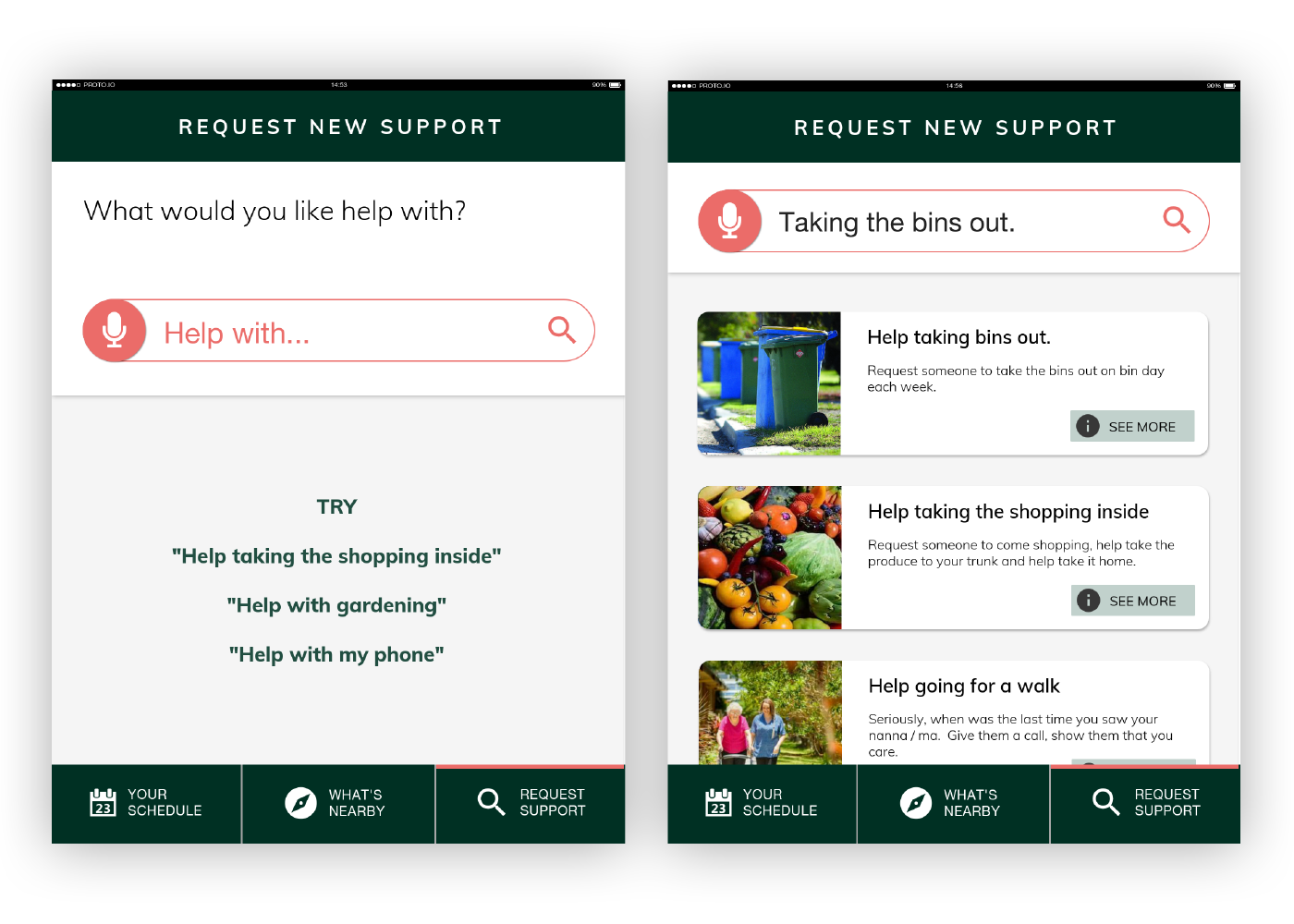

Task search page, showing voice recognition capabilities and the list UI

Image of task search showing the voice recognition capabilities, suited to those with lower dexterity.

Task request page showing how users can specify their needs for the tasks they want support with

Image of task planning flow showing how users can enter their needs on a per-task basis.

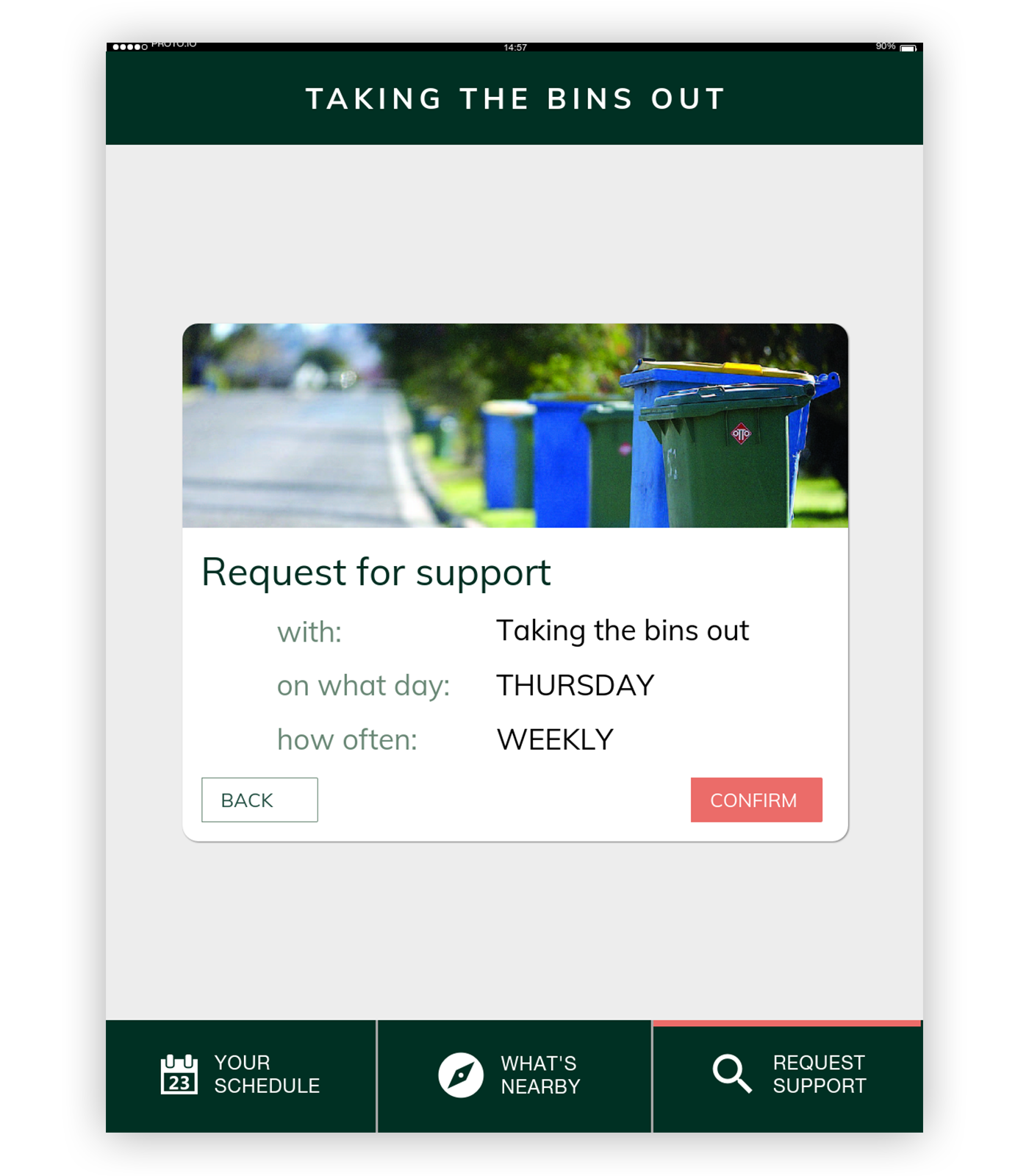

Confirmation page allowing users to double-check that the information they’ve entered is correct

Confirmation screen showing all the information the user has entered so they can double-check that it's correct.